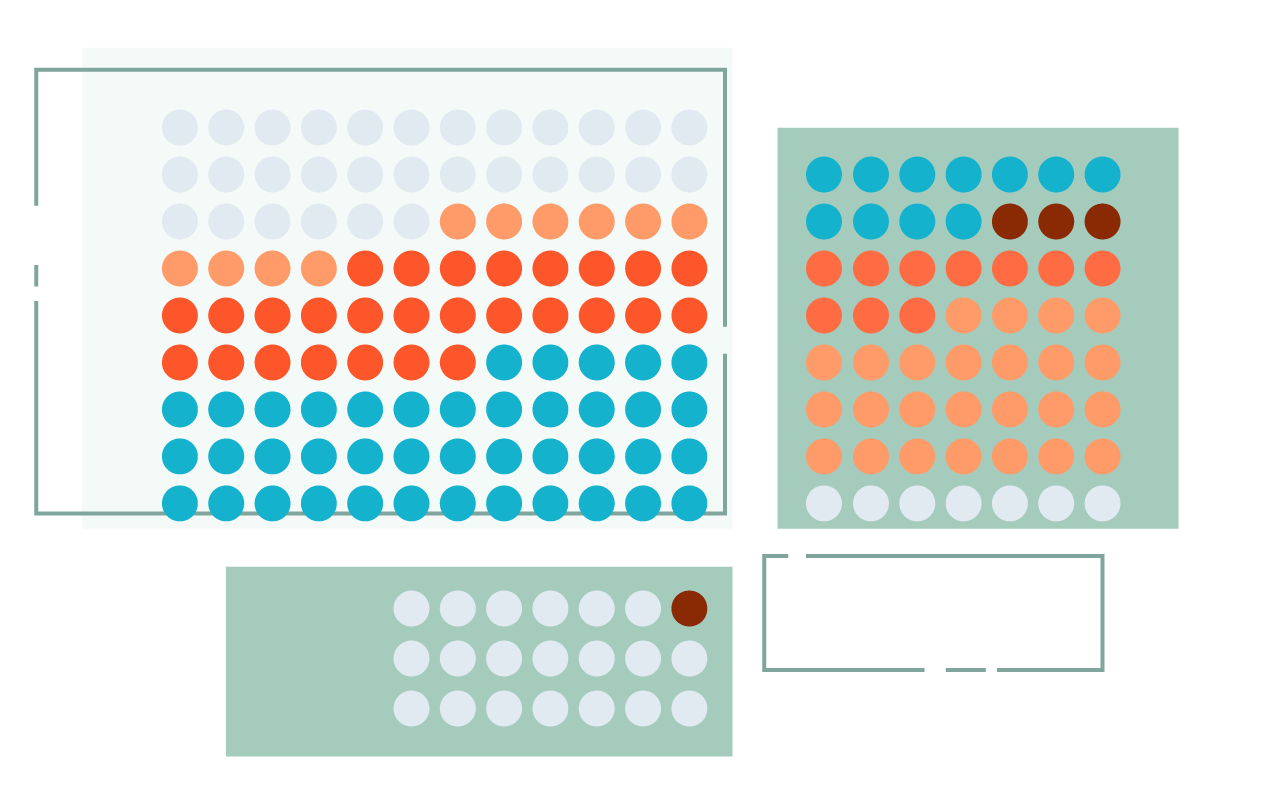

A key challenge in developing and deploying responsible Machine Learning (ML) systems is understanding their performance across a wide range of inputs.

Using the What-If Tool (WIT), you can test performance in hypothetical situations, analyze the importance of different data features, and visualize model behavior across multiple models, subsets of input data, and different ML fairness metrics.

Model probing, from within any workflow

Platforms and Integrations

Compatible models and frameworks

TF Estimators

Models served by TF Serving

Cloud AI Platform Models

Models that can be wrapped in a Python function
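
To illustrate what "wrapped in a Python function" can look like, here is a minimal sketch. It assumes the function receives a list of examples and returns one list of class scores per example. The model (`toy_model_score`) and the helper (`make_predict_fn`) are hypothetical names invented for this illustration and are not part of the What-If Tool.

```python
import math
from typing import Callable, List, Sequence


def toy_model_score(features: Sequence[float]) -> float:
    """Hypothetical stand-in model: logistic function of the feature sum."""
    return 1.0 / (1.0 + math.exp(-sum(features)))


def make_predict_fn(
    score_fn: Callable[[Sequence[float]], float],
) -> Callable[[List[Sequence[float]]], List[List[float]]]:
    """Wrap a single-example scorer as a batch predict function.

    Assumed contract (for this sketch): the returned function takes a list
    of examples and returns, for each one, [P(negative), P(positive)].
    """

    def predict_fn(examples: List[Sequence[float]]) -> List[List[float]]:
        outputs = []
        for features in examples:
            positive = score_fn(features)
            outputs.append([1.0 - positive, positive])
        return outputs

    return predict_fn


predict_fn = make_predict_fn(toy_model_score)
scores = predict_fn([[0.0, 0.0], [2.0, 1.0]])
```

In the `witwidget` notebook package, a function of this shape is, to the best of our knowledge, registered through `WitConfigBuilder.set_custom_predict_fn`; check the current documentation for the exact input and output formats it expects.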

Supported data and task types

Binary classification

Multi-class classification

Regression

Tabular, Image, Text data

Ask and answer questions about models, features, and data points

What’s the latest

CODE

Contribute to the What-If Tool

The What-If Tool is open to anyone who wants to help develop and improve it!

UPDATES

Latest updates to the What-If Tool

New features, updates, and improvements to the What-If Tool.

RESEARCH

Systems Paper at IEEE VAST ’19

Read about what went into the What-If Tool in our systems paper, presented at IEEE VAST ’19.

ARTICLE

Playing with AI Fairness

The What-If Tool lets you try on five different types of fairness. What do they mean?